It would just start learning how to approximate the answers, but it will never converge to a state where it learns the rule of addition.ĬhatGPT is good at math and can understand the logic. Should be doable right! Now think of how you would have to learn the weights of a model with 1.000.000 parameters to do the exact same, using a large dataset and a NN optimizer.

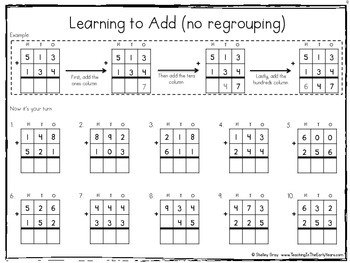

They are multiplying and adding up inputs, weights and biases, and they are not designed to learn 'if, else' logic.Īn additional train of thought which i very much like is the following: Think of how precise a super small multi-layer perception would have to be to implement simple 2 digit addition (given 4 inputs). Neural networks cannot learn rules simply because they are not designed to do so. Addition is a simple 'rule', but it cannot comprehend this rule. These AIs learn their data well, but they cannot extrapolate strict 'rules' from the data. They throw it an endless amount of data for simple addition, but throw it a slightly higher number that it has never seen before, and it cannot solve it.

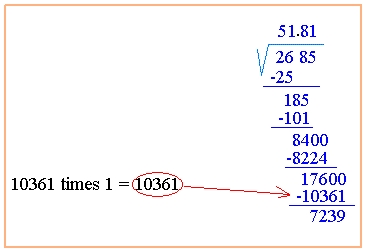

One of the best examples are papers that try to make mathematics AIs. However, throw it a slightly different mathematical problem it has not seen before, and it fails to answer spectacularly easy questions. The problem is that the model learns everything present in the data. On a high level, your question is very philosophical. The problem comes down to the age-old problem of learning vs understanding. Why is ChatGPT bad at math, while it is very good at other stuff? (Check out my heavily related answer to a similar question here) That is because they do have that understanding that chatGPT has not. The difference is that you can explain to humans where their quick-and-dirty answer goes wrong. What is noteworthy is that many humans make the same 5/5/5 100/100/100 error. It's not so much that chatGPT is intelligent, but more that we are less so that we think. 'Well-formed' and 'fitting' do suggest to humans reliability and intelligence strongly, but that is more because we humans judge quickly and superficially. More data and training will not help, it will become even more 'well-formed' and 'fitting', but not more reliable. But 'well-formed' and 'fitting' do not equate 'reliability' nor 'trustworthiness'. It is the phone's autocompletion on steroids. You can see how many words have a bit of a statistical likelihood relation backwards time for, for a, time -> quick, quick dinner, with us, with -> at all.ĬhatGPT is fundamentally the same but much better than what the phone can do. "I am going on a trip with my friends to get to the full house for the weekend so we will need a time for a quick dinner with us at all and we can go over and there for the weekend if we want you guys out there" Choose the most likely words for a message and the sentence that results is something like (just started and chose a stream of 'middle options'): On the phone that is not of a very high quality. 
You type on your screen and the phone gives you three words that you would statistically use most often after the previous one. In the most simple variant, every smartphone already has that mechanism. It has rules extracted from its data, but those are not rules of understanding, but rules of 'likely phrases'. Why does this model make such simple logical mistakes?ĬhatGPT is able to create well-formed sentences which contain phrases that are fitting for the input.

Why is this AI so bad at math? Computers are generally supposed to be good at math. but concludes in the same response that it's then 500 minutes. ChatGPT first makes the intuitive mistake of 100, that a human might make as well, and then goes on to (correctly, as far as I understand) say it's 5 minutes. Now, unless I'm misunderstanding something here, the answer is 5 minutes. Therefore, it would take a total of 500 minutes for 100 machines to make 100 devices. How long would it take 100 machines to make 100 devices?ĬhatGPT: If it takes a single machine 5 minutes to make a single device, then it would take 100 machines 5 minutes to make 100 devices. Mithical: It takes a machine 5 minutes to make a device. Can you please provide more information or clarify your question? I may have misunderstood the scenario you described. Check carefully and try again.ĬhatGPT: I apologize if my response was not correct. Mithical: If it takes 5 machines 5 minutes to make 5 devices, how long would it take 100 machines to make 100 devices?ĬhatGPT: If it takes 5 machines 5 minutes to make 5 devices, then it would take 100 machines 100 minutes to make 100 devices. For instance, I asked it "If it takes 5 machines 5 minutes to make 5 devices, how long would it take 100 machines to make 100 devices?" As opposed to How does ChatGPT know math?, I've been seeing some things floating around the Twitterverse about how ChatGPT can actually be very bad at math.
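The arithmetic in the machines puzzle from the question can be made explicit with a rate calculation. This minimal sketch assumes, as the puzzle implies, that every machine works at the same constant rate and that the machines run in parallel. The function name `time_needed` is hypothetical.

```python
from fractions import Fraction


def time_needed(ref_machines, ref_minutes, ref_devices, machines, devices):
    """Minutes for `machines` to make `devices`.

    Assumes (hypothetically) identical machines working in parallel at a
    constant rate, calibrated from a reference run.
    """
    # Rate of one machine in devices per minute: 5 / (5 * 5) = 1/5.
    rate_per_machine = Fraction(ref_devices, ref_machines * ref_minutes)
    # Elapsed time = work to do / combined rate of all machines.
    return Fraction(devices) / (machines * rate_per_machine)


print(time_needed(5, 5, 5, 100, 100))  # 5
print(time_needed(1, 5, 1, 100, 100))  # 5

# 500 only appears if you count total machine-minutes of work
# (100 machines x 5 minutes each). That is not the elapsed time
# when the machines run in parallel.
```

Using `Fraction` keeps the rates exact, so the answer comes out as exactly 5 rather than a float that merely rounds to 5.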
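The phone-autocomplete analogy from the first answer can be made concrete with a toy bigram model. It counts which word follows which, offers the three most frequent followers as suggestions, and can 'babble' a sentence by always taking the middle option. The corpus and all function names are made up for illustration. This is only a sketch of the statistical idea, not how phone keyboards or ChatGPT are actually implemented.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """For every word, count how often each next word follows it."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows


def suggest(follows, prev, k=3):
    """Up to k most frequent next words, like a phone's suggestion bar."""
    return [word for word, _ in follows[prev].most_common(k)]


def babble(follows, start, length=8, pick=1):
    """Keep appending the suggestion at index `pick` (the 'middle option')."""
    words = [start]
    for _ in range(length):
        options = suggest(follows, words[-1])
        if not options:  # no known follower: stop
            break
        words.append(options[min(pick, len(options) - 1)])
    return " ".join(words)


# Hypothetical toy corpus.
corpus = [
    "we need time for a quick dinner",
    "time for a break",
    "dinner with us at the house",
    "going with friends for the weekend",
]
model = train_bigrams(corpus)
print(suggest(model, "for"))   # ['a', 'the']
print(babble(model, "time"))   # time for the weekend
```

Each step of the output is locally plausible, but the model has no notion of what the sentence as a whole means.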
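The second answer's point about learning the data without learning the rule can be exaggerated into a tiny experiment. The sketch compares a hypothetical 'memorizer' with the rule `a + b`, on one input inside the training range and one outside it. The memorizer is a 1-nearest-neighbour lookup over every sum with operands from 0 to 99. It is a deliberately crude stand-in, not a neural network.

```python
def train_memorizer(max_operand=99):
    """'Training data': every sum a + b with 0 <= a, b <= max_operand."""
    return {
        (a, b): a + b
        for a in range(max_operand + 1)
        for b in range(max_operand + 1)
    }


def memorizer_predict(table, a, b):
    """Answer with the sum of the closest example seen in training."""
    nearest = min(table, key=lambda ab: (ab[0] - a) ** 2 + (ab[1] - b) ** 2)
    return table[nearest]


def rule_predict(a, b):
    """The rule itself: works for any integers, seen or not."""
    return a + b


table = train_memorizer()
print(memorizer_predict(table, 42, 17), rule_predict(42, 17))  # 59 59
print(memorizer_predict(table, 150, 7), rule_predict(150, 7))  # 106 157
```

Inside the training range the memorizer is perfect. Just outside it, the memorizer answers with the nearest thing it has seen, (99, 7), instead of applying addition.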
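The 'super small multi-layer perceptron' thought experiment can be written out with hand-picked weights. This sketch assumes the 4 inputs are the tens and ones digits of the two operands. The weights (10, 1 and 1) are chosen by hand rather than learned, and `tiny_adder` and its `w_tens` parameter are hypothetical names. The sketch shows that exact weights exist, and that nudging one weight from 10 to 10.1 already gives a wrong sum for 99 + 99.

```python
def relu(x):
    return max(0.0, x)


def tiny_adder(a_tens, a_ones, b_tens, b_ones, w_tens=10.0):
    """Hand-wired MLP: 4 inputs, 2 hidden ReLU units, 1 linear output."""
    # Hidden unit 1 rebuilds the first number, hidden unit 2 the second.
    # Digits are non-negative, so ReLU passes these values through unchanged.
    h1 = relu(w_tens * a_tens + 1.0 * a_ones + 0.0)
    h2 = relu(w_tens * b_tens + 1.0 * b_ones + 0.0)
    # Output unit: weights 1 and 1, bias 0.
    return 1.0 * h1 + 1.0 * h2


exact = all(
    tiny_adder(a // 10, a % 10, b // 10, b % 10) == a + b
    for a in range(100)
    for b in range(100)
)
print(exact)                                  # True
print(tiny_adder(9, 9, 9, 9))                 # 198.0
print(tiny_adder(9, 9, 9, 9, w_tens=10.1))    # roughly 199.8 instead of 198
```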
